Research

The last door to unlimited performance: XDP in Solana Validator Client

Enabling XDP in the Agave client greatly increases the scalability needed to surpass the milestone of one million transactions per second and go beyond it.

Introduction

In blockchain networks like Solana, where validators must handle vast streams of data to achieve consensus on thousands of transactions per second, the interplay between software and hardware becomes a defining factor in performance. Solana's design prioritizes raw throughput, propagating data shreds across a global network of peers via its Turbine protocol. Yet, as block sizes grow to accommodate more compute units (CUs), traditional networking paths in the Linux kernel introduce inefficiencies that can stall progress. The eXpress Data Path (XDP) emerges as a key optimization, allowing early packet intervention directly at the network interface card (NIC) level. This article explores XDP's role in Solana's ecosystem, detailing kernel-NIC dynamics with and without it, its integration in the Agave client, scalability implications and recent advancements in supporting overlays like DoubleZero.

Historical Context: Solana's Networking Evolution

Solana's journey toward high-performance networking began with its proof-of-history consensus, which decouples timekeeping from block production to enable parallel processing. Early validators relied on standard UDP sockets, but as the network scaled from testnets to mainnet in 2020, bottlenecks in packet handling became apparent. Turbine, the protocol used to spread blocks within the network, fragments them into shreds for fan-out dissemination. However, kernel overheads such as syscall latency and buffer copies limited outbound rates to tens of thousands of packets per second.

By 2023, Anza (formerly Solana Labs' engineering arm) identified Turbine as a core limiter for larger blocks. Initial optimizations focused on QUIC for reliable transport, but deeper kernel bypass was needed. XDP, introduced in Linux 4.8, offered a path forward by executing eBPF programs in the driver context. Solana's adoption accelerated in 2025, coinciding with Firedancer's emergence from Jump Crypto. This evolution reflects a broader trend in distributed systems, where projects like Ethereum's Danksharding or Celestia's data availability layers grapple with similar propagation challenges, but Solana's model amplifies the need for sub-millisecond efficiencies given its extreme emphasis on maximizing performance.

Traditional Kernel-NIC Interactions in Linux

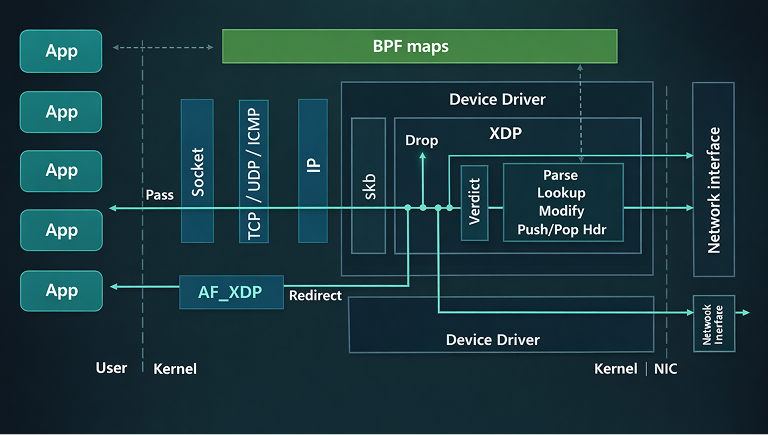

The Linux kernel manages network traffic through a structured pipeline that begins at the hardware level. When a NIC receives packets, it uses direct memory access (DMA) to transfer data into ring buffers in kernel memory. An interrupt notifies the kernel, triggering the network stack to process the packets. This involves several layers: the device driver handles initial reception, followed by protocol processing in the IP and transport layers, before delivery to user-space applications via sockets.

This pathway introduces overhead from context switches between kernel and user space, data copies, and queue management. For high-volume UDP traffic, common in peer-to-peer systems, the kernel's softirq mechanism distributes processing across CPUs using New API (NAPI) polling. However, under sustained loads, such as those in blockchain validators, these operations can consume significant CPU cycles, leading to bottlenecks in packet ingestion and dispatch.

In Solana, validators exchange shreds (fragments of blocks) via Turbine, a multi-layer fan-out propagation mechanism. Without optimizations, packets traverse the full kernel stack, resulting in latencies that compound during peak activity. Measurements from production environments show that kernel overhead can account for up to 30% of processing time in validators handling 150,000 packets per second.

XDP-Enhanced Kernel-NIC Dynamics

XDP introduces a hook in the kernel's receive path, allowing eBPF (extended Berkeley Packet Filter) programs to execute directly in the driver context after DMA but before full stack processing. This enables decisions to pass, drop, or redirect packets with minimal overhead. For transmission, XDP supports zero-copy sending via AF_XDP sockets, bypassing traditional send paths.

With XDP, packets are inspected in-place within the driver's ring buffer, avoiding allocations and copies. If a packet matches application criteria, it can be forwarded to user space efficiently; otherwise, it's dropped early, conserving resources. In benchmarks, XDP reduces per-packet CPU usage by factors of 10 to 100 compared to standard sockets, particularly for UDP workloads.
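The pass, drop, or redirect decision described above can be illustrated with a small user-space simulation. This is only a sketch: real XDP programs are written in restricted C and compiled to eBPF, and the port number, the policy, and the helper `build_udp_frame` are assumptions made up for this example. Only the verdict values follow the kernel's `enum xdp_action`.

```python
import struct

# Verdict values mirror the kernel's `enum xdp_action`; the policy below is illustrative.
XDP_DROP, XDP_PASS, XDP_REDIRECT = 1, 2, 4

ETH_P_IP = 0x0800      # EtherType for IPv4
IPPROTO_UDP = 17
SHRED_PORT = 8001      # hypothetical port, chosen for this example only


def xdp_verdict(frame: bytes) -> int:
    """Decide what to do with a raw Ethernet frame by reading its headers in place."""
    if len(frame) < 14:
        return XDP_DROP                     # truncated Ethernet header
    (ethertype,) = struct.unpack_from("!H", frame, 12)
    if ethertype != ETH_P_IP:
        return XDP_PASS                     # not IPv4: leave it to the regular stack
    if len(frame) < 14 + 20:
        return XDP_DROP                     # truncated IPv4 header
    ihl = (frame[14] & 0x0F) * 4            # IPv4 header length in bytes
    if ihl < 20 or len(frame) < 14 + ihl + 8:
        return XDP_DROP                     # malformed or truncated packet
    if frame[14 + 9] != IPPROTO_UDP:
        return XDP_PASS                     # e.g. TCP traffic such as SSH
    (dst_port,) = struct.unpack_from("!H", frame, 14 + ihl + 2)
    return XDP_REDIRECT if dst_port == SHRED_PORT else XDP_PASS


def build_udp_frame(dst_port: int, payload: bytes = b"shred") -> bytes:
    """Build a minimal Ethernet + IPv4 + UDP frame (checksums left at zero)."""
    eth = b"\x00" * 12 + struct.pack("!H", ETH_P_IP)
    total_len = 20 + 8 + len(payload)
    ip = struct.pack("!BBHHHBBH4s4s", 0x45, 0, total_len, 0, 0, 64,
                     IPPROTO_UDP, 0, bytes([10, 0, 0, 1]), bytes([10, 0, 0, 2]))
    udp = struct.pack("!HHHH", 40000, dst_port, 8 + len(payload), 0)
    return eth + ip + udp + payload
```

In this sketch, traffic that does not match is passed to the normal stack rather than dropped, so unrelated services keep working. A stricter policy could return `XDP_DROP` for anything that is not expected on the interface.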

In Solana's context, XDP transforms Turbine operations. Validators can ingest shreds directly from the NIC, filter irrelevant traffic, and dispatch responses with minimal kernel mediation. This is especially beneficial for leaders, who fan out shreds to hundreds of peers, and it aligns with Solana's IBRL (Increase Bandwidth, Reduce Latency) goals, preparing for sub-400ms slots.

XDP in the Solana Agave Client

The Agave client, developed by Anza in Rust, serves as Solana's primary validator software. Prior to XDP integration, Agave relied on QUIC over UDP for Turbine, using the kernel's networking stack. Packets were received via user-space sockets, processed in threads, and sent back through similar channels. This approach scaled to current mainnet loads but struggled with projected increases, such as 100 million compute units (CUs) per block. Turbine dispatch became a chokepoint, with CPU saturation limiting outbound rates to around 150,000 packets per second.

XDP integration, introduced in Agave v2.3.8 and refined in v3.0, rewrites Turbine to leverage kernel bypass. Incoming shreds are handled by an eBPF program attached to the NIC, which maps packets to user-space buffers via AF_XDP. Outbound shreds are queued on the AF_XDP transmit ring for direct transmission, eliminating per-packet syscalls and copies. This results in dispatch rates exceeding 2 million packets per second on commodity hardware, more than 13 times the roughly 150,000 packets per second ceiling of the previous path.
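To make the idea of sharing packet buffers with user space more concrete, here is a toy model of a descriptor ring over a shared memory area, loosely inspired by how AF_XDP pairs a packet memory region (UMEM) with single-producer/single-consumer rings. It is not Agave's code and does not use the real AF_XDP API. The class `Ring`, the functions `enqueue_shred` and `drain_one`, and the frame size are all hypothetical names and values for illustration.

```python
class Ring:
    """Single-producer/single-consumer ring of descriptors, sized to a power of two."""

    def __init__(self, size: int):
        assert size > 0 and size & (size - 1) == 0, "size must be a power of two"
        self.mask = size - 1
        self.slots = [None] * size
        self.producer = 0   # advanced only by the producer
        self.consumer = 0   # advanced only by the consumer

    def push(self, desc) -> bool:
        if self.producer - self.consumer == len(self.slots):
            return False                    # ring full: caller must back off
        self.slots[self.producer & self.mask] = desc
        self.producer += 1
        return True

    def pop(self):
        if self.consumer == self.producer:
            return None                     # ring empty
        desc = self.slots[self.consumer & self.mask]
        self.consumer += 1
        return desc


FRAME_SIZE = 2048                            # bytes per frame (illustrative value)
umem = bytearray(4 * FRAME_SIZE)             # shared packet memory, four frames
tx_ring = Ring(4)


def enqueue_shred(frame_index: int, payload: bytes) -> bool:
    """Write the payload into a frame and publish only an (offset, length) descriptor."""
    offset = frame_index * FRAME_SIZE
    umem[offset:offset + len(payload)] = payload
    return tx_ring.push((offset, len(payload)))


def drain_one():
    """Consumer side: read a descriptor and view the bytes without copying them."""
    desc = tx_ring.pop()
    if desc is None:
        return None
    offset, length = desc
    return memoryview(umem)[offset:offset + length]
```

The point of the design is that only small descriptors move through the ring while the packet bytes stay in place, which is where the savings in copies come from.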

Without XDP, Agave's performance plateaus under high fan-out, as seen in load tests where 12 cores were needed for shred propagation. With XDP, a single core suffices, allowing the freed cores to be reallocated to banking threads for transaction verification. Alessandro Decina, lead of Anza's performance team, noted in a presentation that this shift enables blocks of 100M CUs and beyond, providing headroom for network upgrades. Internal traces show IOPS and scheduler contention reduced by 40%, aligning with broader optimizations in Agave v3.1.
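A back-of-the-envelope calculation helps put the core counts in perspective. The sketch below assumes that the 12-core figure corresponds to the roughly 150,000 packets per second ceiling and that the single core corresponds to the 2 million packets per second rate. The article does not state that these numbers come from the same test, so the result is indicative only.

```python
def core_time_per_packet_us(cores: float, packets_per_second: float) -> float:
    """CPU core-time spent per packet, in microseconds, when `cores` are fully busy."""
    return cores * 1_000_000 / packets_per_second


# Assumed pairing of figures quoted in the text (see the caveat above).
without_xdp = core_time_per_packet_us(cores=12, packets_per_second=150_000)
with_xdp = core_time_per_packet_us(cores=1, packets_per_second=2_000_000)

print(f"without XDP: {without_xdp:.1f} us of core time per packet")
print(f"with XDP:    {with_xdp:.1f} us of core time per packet")
print(f"ratio:       {without_xdp / with_xdp:.0f}x")
```

Under these assumptions the per-packet CPU cost drops from about 80 microseconds to about 0.5 microseconds of core time.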

XDP's Absence in Early Agave Configurations

In configurations without XDP, Agave falls back to legacy QUIC handling, which is viable for current 60M CU blocks but inadequate for scaling. Packet processing involves repeated kernel traversals, leading to jitter and dropped shreds during congestion. Validators experience higher skip rates, as shreds arrive too late for consensus. This was evident in specific moments of extreme network usage, where network load caused forking.

XDP is enabled through command-line options and requires NIC drivers with native support (e.g., mlx5 or ice). Without XDP, scalability is capped, underscoring its role as a prerequisite for Solana's growth.

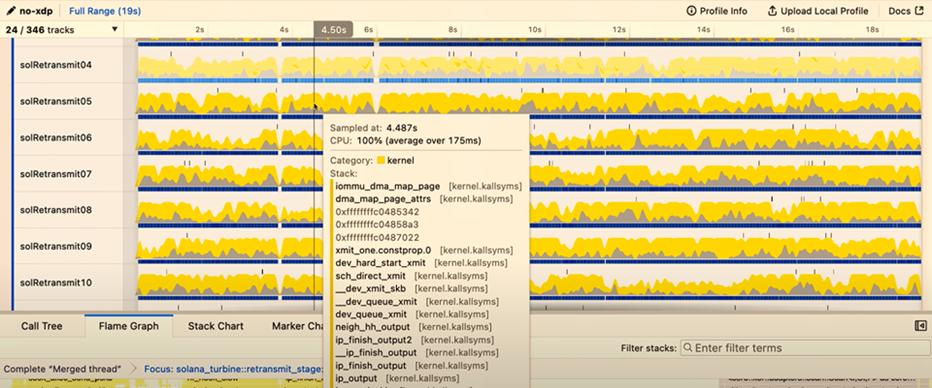

The image above shows how, even with several cores dedicated to replay, the resulting task overhead is large enough to degrade performance.

Image from Accelerate 2025, shared by Alessandro Decina

XDP as a Scalability Enabler in Solana

Solana's architecture prioritizes single-shard throughput, with Turbine central to block propagation. As block sizes grow, outbound traffic grows with the number of shreds multiplied by each node's fan-out, straining conventional networking. XDP unblocks this by minimizing per-packet costs, allowing validators to handle more shreds without proportional CPU increases.
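One way to reason about outbound load is a simplified tree model of fan-out propagation. The sketch below is an assumption-laden illustration, not Turbine's actual implementation: the function names are hypothetical, and the fan-out of 200 and the shred count used in the usage note are example values rather than figures taken from this article.

```python
def turbine_layers(nodes: int, fanout: int) -> int:
    """Number of tree layers needed below the root so that `nodes` receivers are covered."""
    layers, covered, width = 0, 1, 1       # start with the single root node
    while covered < nodes:
        width *= fanout                     # each node in the last layer feeds `fanout` children
        covered += width
        layers += 1
    return layers


def packets_forwarded(shreds: int, children: int) -> int:
    """Packets one retransmitting node sends for a block: every shred goes to every child."""
    return shreds * children
```

For example, `turbine_layers(1000, 200)` needs two layers below the root, and a node forwarding 1,000 shreds to 200 children sends `packets_forwarded(1000, 200)`, that is 200,000 packets for a single block. In this model it is the per-packet cost, multiplied by that count, that XDP reduces.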

Decina's analyses highlight Turbine as the primary bottleneck for 100M+ CU blocks. Without XDP, dispatch overhead limits effective throughput to 50-60M CUs; with it, tests achieve 100M CUs sustainably. This correlates with reduced latency in gossip protocols, improving fork resolution and overall finality. Anza's data from Breakpoint 2025 shows XDP-enabled validators propagating blocks 2-3x faster, directly impacting scalability. Broader implications include lower fees during congestion and support for high-frequency applications like decentralized exchanges.

Insights from Anza developers, including Decina, emphasize empirical testing. In a RustConf talk, he described XDP as akin to audio ring buffers, enabling out-of-order packet handling with minimal overhead. This draws from his GStreamer background, where millisecond savings were critical—parallels that inform Solana's sub-second goals.

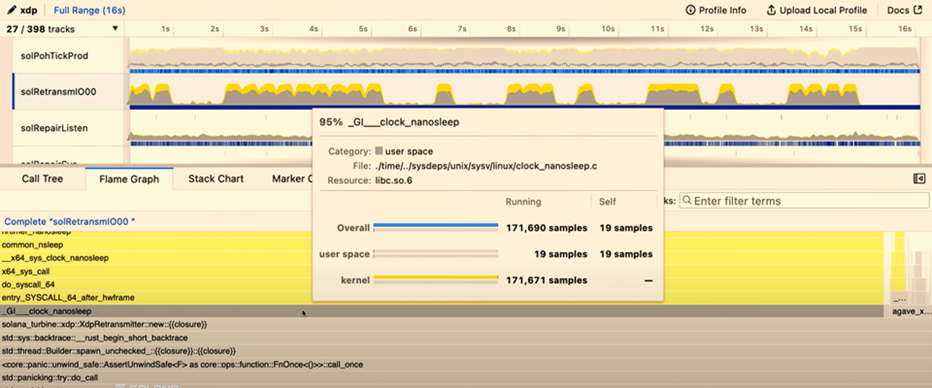

The image above shows that, with XDP enabled on a single core, the CPU sleeps 95% of the time, a dramatic difference compared with having it disabled.

Image from Accelerate 2025, shared by Alessandro Decina

Firedancer's XDP Implementation in C

Firedancer, built by Jump Crypto in C, represents an independent validator client emphasizing hardware efficiency. From inception, it incorporated XDP for networking, using a tile-based architecture where dedicated components (tiles) manage tasks like packet ingestion.

The networking tile leverages XDP for kernel bypass, accessing packets directly from the NIC via AF_XDP sockets and zero-copy buffers. This achieves over 1 million transactions per second in tests, far surpassing Agave's baseline. Firedancer's C implementation allows fine-grained control over memory and threading, with tiles running in isolated contexts to minimize contention. QUIC is customized, intercepting packets pre-kernel for low-latency processing.

A hybrid deployment grafts Firedancer's XDP components onto Agave's consensus layer, enabling incremental rollout. Full Firedancer targets 1M TPS on commodity hardware, using AVX-512 for signature verification alongside XDP.

Contrasting Implementations: Firedancer vs. Agave

Firedancer's C codebase prioritizes raw performance, with XDP integrated deeply into tiles for parallelism. Agave, in Rust, focuses on safety and modularity, adding XDP as an enhancement to existing QUIC stacks. Differences include:

- Language Trade-offs: C enables direct hardware access but risks errors; Rust's ownership model prevents races but adds overhead in high-throughput paths.

- Architecture: Firedancer's tiles isolate functions, allowing independent scaling. Agave uses threaded pools, which XDP optimizes but doesn't fully modularize.

- Maturity: Firedancer, live on mainnet since late 2025, emphasizes kernel bypass from day one. Agave adopted XDP later, in response to scaling needs.

- Performance Metrics: Firedancer hits 1M TPS targets; Agave with XDP reaches 100M CUs but requires further tuning for equivalent rates.

These divergences promote client diversity, reducing network-wide risks from bugs.

XDP over DoubleZero: Addressing GRE Tunnel Support

DoubleZero provides a private fiber underlay for Solana, routing consensus traffic via GRE tunnels to reduce latency. Early Agave XDP lacked support for virtual interfaces like GRE, as XDP hooks attach to physical NICs. This prevented integration, forcing validators to choose between XDP efficiency and DoubleZero's bandwidth.

Anza resolved this by extending XDP to bridged or tunneled interfaces, enabling attachment over GRE. Decina announced support for IBRL mode on DoubleZero, combining XDP's speed with the underlay's low jitter. Now, validators tunnel to DoubleZero exchanges, where XDP processes packets on the private backbone. This hybrid yields sub-10ms round-trips across continents, unblocking scalability in geo-distributed setups.
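The reason tunnels complicate early packet processing is that a program looking at the physical NIC sees the outer IP and GRE headers before the actual payload. The sketch below builds and strips a basic GRE-over-IPv4 encapsulation to show that layering. It is a simplified illustration, not Anza's or DoubleZero's code: the helper names are hypothetical, optional GRE fields (checksum, key, sequence) are not handled, and the addresses in the usage note are arbitrary.

```python
import struct

IPPROTO_GRE = 47       # IP protocol number assigned to GRE
ETH_P_IP = 0x0800      # GRE protocol type for an inner IPv4 packet


def ipv4_header(proto: int, payload_len: int, src: bytes, dst: bytes) -> bytes:
    """Minimal 20-byte IPv4 header (checksum left at zero for brevity)."""
    return struct.pack("!BBHHHBBH4s4s", 0x45, 0, 20 + payload_len,
                       0, 0, 64, proto, 0, src, dst)


def gre_encapsulate(inner: bytes, src: bytes, dst: bytes) -> bytes:
    """Wrap an inner IPv4 packet in a basic 4-byte GRE header and an outer IPv4 header."""
    gre = struct.pack("!HH", 0, ETH_P_IP)   # no checksum/key/sequence flags set
    return ipv4_header(IPPROTO_GRE, len(gre) + len(inner), src, dst) + gre + inner


def gre_decapsulate(packet: bytes):
    """Return the inner IPv4 packet, or None if this is not basic GRE-over-IPv4."""
    if len(packet) < 24 or packet[0] >> 4 != 4:
        return None
    ihl = (packet[0] & 0x0F) * 4            # outer IPv4 header length in bytes
    if packet[9] != IPPROTO_GRE or len(packet) < ihl + 4:
        return None
    flags, proto = struct.unpack_from("!HH", packet, ihl)
    if flags != 0 or proto != ETH_P_IP:
        return None                          # optional GRE fields are not handled here
    return packet[ihl + 4:]
```

As a usage example, wrapping `inner = ipv4_header(17, 0, bytes([10, 0, 0, 1]), bytes([10, 0, 0, 2]))` with `gre_encapsulate(inner, bytes([192, 0, 2, 1]), bytes([192, 0, 2, 2]))` yields a packet whose outer protocol field is 47, and `gre_decapsulate` recovers `inner` unchanged.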

Conclusion

XDP's integration into Solana clients exemplifies how kernel optimizations can elevate distributed system performance. By streamlining kernel-NIC interactions, it enables validators to scale Turbine efficiently, addressing bottlenecks in block propagation. Agave's Rust-based approach and Firedancer's C implementation offer complementary paths, with DoubleZero support enhancing real-world deployment. As Solana pursues 100M CU blocks and beyond, XDP stands as a foundational enabler, drawing from rigorous engineering to meet the demands of high-throughput networks. Future work may explore hardware offloads, further blurring the line between software and silicon for even greater efficiency.

Contributors

Oscar Garcia, Founder & CEO